POWER THAT IS PLACED IN OUR LAPS

Laptops have common features that are perhaps underestimated. Being able to

both record and play back audio is one of them. Depending on the type of computer,

there are usually resources that explain how to do this. Some of these, like

"Audacity", provide the source code as well!

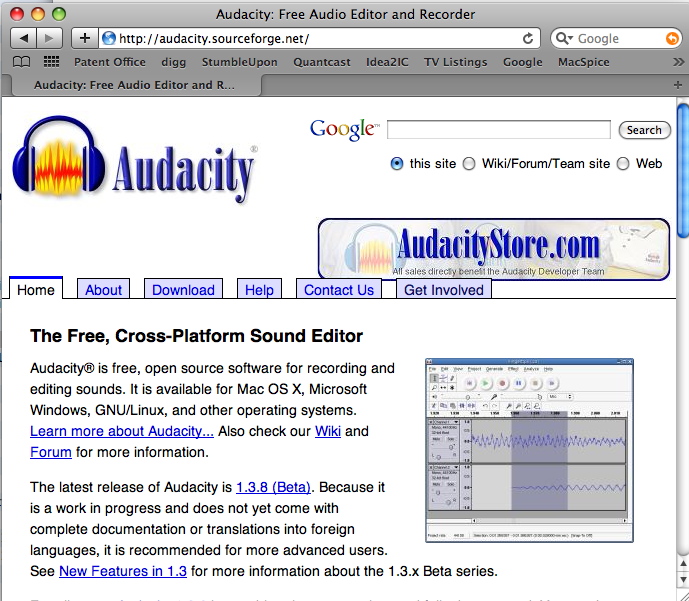

On a MacBook Pro, there is an audio output port and an audio input port, which is commonly

referred to as the "Line In" port. A 40-year-old RCA condenser microphone was used to

record the word "Hello" in the example below.

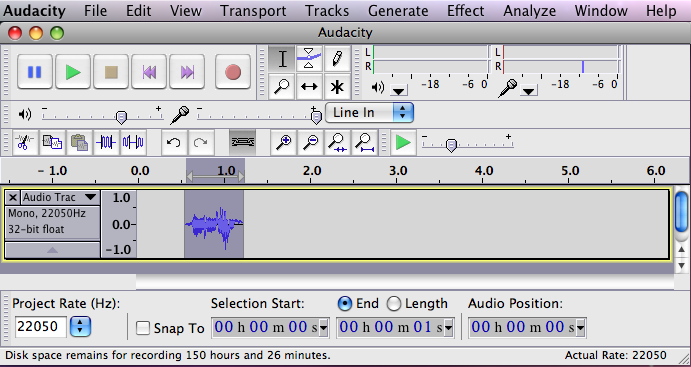

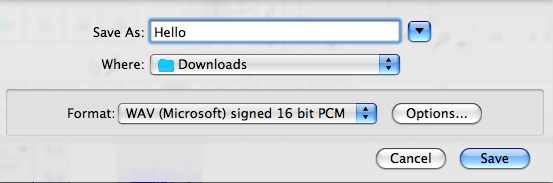

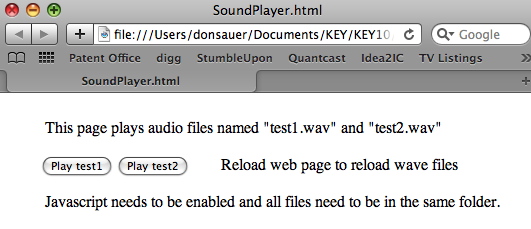

The sound was then exported to a common audio file format. There are many

ways to play the sound. In fact, a web page can play the sound file.

This can come in handy, and it will be shown later.

An example sound-playing web page is found here and a compressed directory

containing source code can be found here.

=============A_Useful_Signal_Digitizer==============================

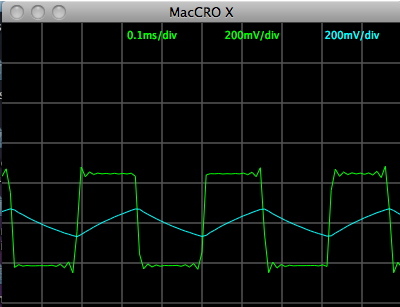

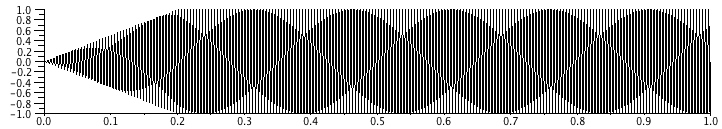

What may not be fully appreciated is that a stereo audio-frequency signal is being

digitized to make this possible. The sampling rate on a Mac using Audacity can reach as high as

96 kHz and as low as 8 kHz. The data is typically stored as 16-bit samples. A lot of code has been written

and is available on the web that can display the incoming audio signal in a dual-trace

oscilloscope mode, as shown below. More detail can be found here and here.

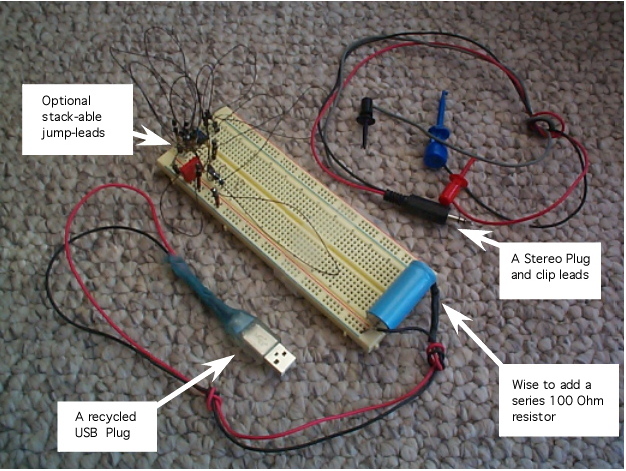

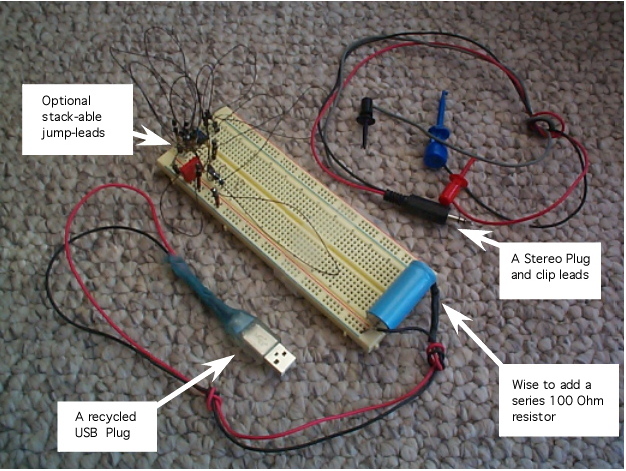

The USB port also provides a 5 volt supply at up to 500 mA. With a little cable interface

construction, a circuit on a solderless breadboard can be powered up and have

two of its analog waveforms displayed in scope format on the same laptop.

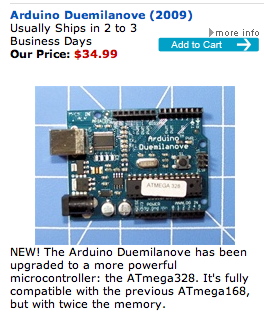

To make things even more fun, an Arduino microcontroller has a full USB interface,

and provides easier access to the 5 volt supply.

Seeing how easy it is to control the Arduino, the temptation to build an analog

breadboard which is both laptop controlled and displayed at the same time is irresistible.

My next breadboard might contain an Arduino controlled LM13600 circuit.

=============Access_To_All_Data==================================

Another powerful resource has become available on the web which addresses

what used to be a bottleneck.

An audio file sampled at 22050 samples per second would produce a huge text file

in no time. To save file size, the data is stored as binary. This does not make it easy

to get at all the sample data and do things with it. The "SciLab" program can now make

that easy. The process involves copying and pasting lines of code into a SciLab window.
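
To put a rough number on the text-versus-binary trade-off, the arithmetic can be sketched in Scilab. The sample rate and 16-bit width come from the text; the figure of 21 characters per text line is only an assumption about how a time/value pair might be printed.

```scilab
// Rough size estimate for one second of mono audio.
Fs = 22050;                          // samples per second, as in the text
bytesPerSample = 2;                  // 16-bit samples
binaryBytes = Fs * bytesPerSample;   // bytes per second when stored as binary

// ASSUMPTION: a two-column text line such as "0.0000454 -0.0123456"
// plus a newline takes about 21 characters.
charsPerLine = 21;
textBytes = Fs * charsPerLine;       // bytes per second when stored as text

mprintf("binary: %d bytes/s, text: about %d bytes/s\n", binaryBytes, textBytes);
```

Under that assumption the text form is roughly ten times larger than the binary form.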

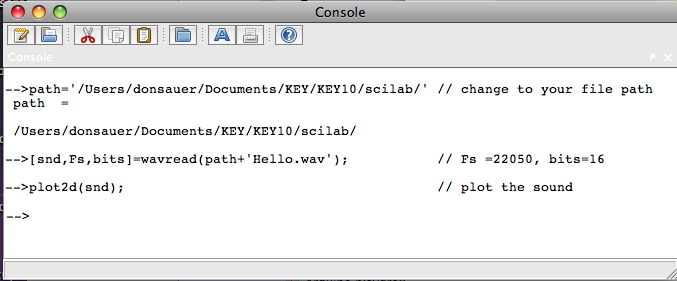

path='/Users/donsauer/Documents/KEY/KEY10/scilab/' // change to your file path

[snd,Fs,bits]=wavread(path+'Hello.wav'); // Fs =22050, bits=16

plot2d(snd); // plot the sound

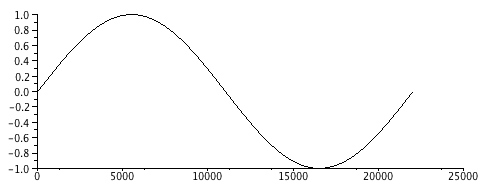

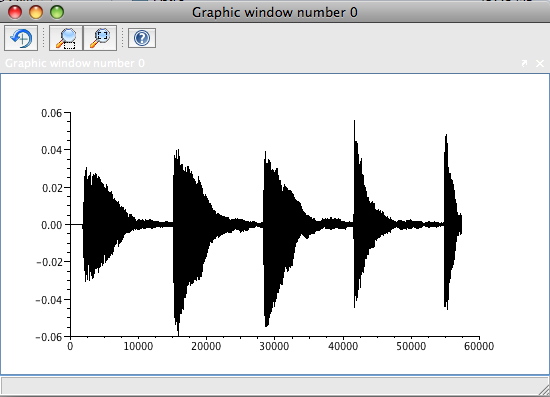

In the example above, the audio file "Hello.wav" is downloaded and placed in the

scilab directory. The full path to this directory needs to be set correctly.

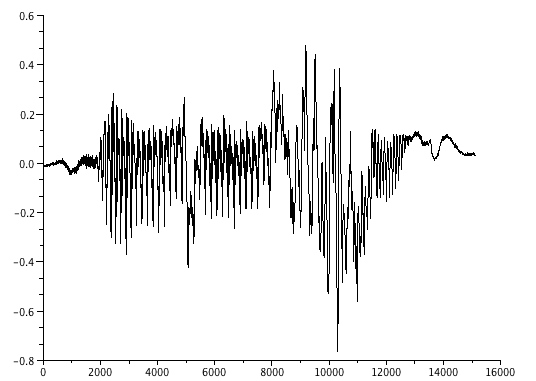

The resulting window and its graph are shown below.

The wavread() function returns the bit depth (bits) and the sample rate (Fs) along with

the data points (snd). The data is read into the one-dimensional array "snd".

It is plotted as values versus the index of the array.

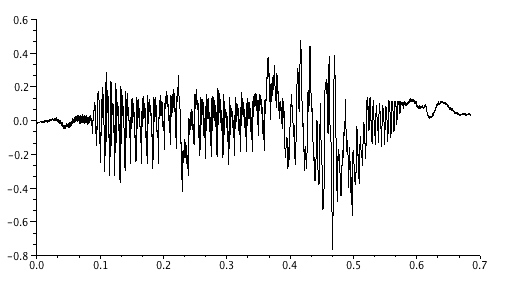

N= size(snd,'*') // N= 15104

TT=(N-1)/Fs // total time TT = 0.6849433

t = 0:1/Fs:TT; // create a time array 15104 points

clf(); // Clear graph

plot2d(t,snd); // plot as x/y plot

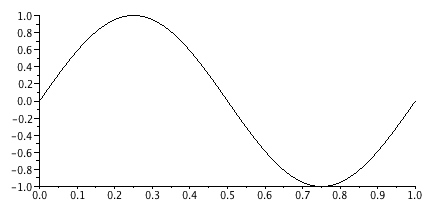

The size() function returns the number of elements in the snd array, and a time array t is

constructed to have the same number of points. Now the array snd can be plotted versus

time as an x/y plot.
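
A time array built with a fractional step such as 0:1/Fs:TT relies on floating-point rounding to include the last point. As a hedged alternative, the sketch below builds the time axis from integer sample indices, so its length always matches the sound array. The zero-filled snd is only a stand-in for the samples read from Hello.wav.

```scilab
// Build a time axis with exactly one entry per sample.
Fs = 22050;                 // sample rate reported by wavread()
snd = zeros(1, 15104);      // stand-in for the samples read from Hello.wav
N = size(snd, '*');         // number of samples
t = (0:N-1) / Fs;           // sample k (1-based) sits at time (k-1)/Fs

disp(size(t, '*') == N);    // the two arrays match in length
disp(t($));                 // time of the last sample, (N-1)/Fs
```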

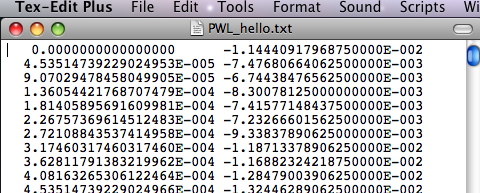

The snd array can be printed out to a text file. But it may be easier to view the

data in a two-column format which shows time first and then value. The following

code creates the two-column array x and writes it out as a text file.

for i=1:N; // starts the for loop

x(i,1)=t(i); // two col array time first

x(i,2)=snd(i); // two col array sound second

end; // ends the for loop

write( path+ 'PWL_hello.txt' ,x); // write to text file
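
The same two-column table can also be built without a loop. This is a minimal sketch, not the author's method: the short sine wave stands in for the real samples, and the output file name in Scilab's temporary directory is hypothetical.

```scilab
// Two-column (time, value) table without a loop.
Fs = 22050;
snd = sin(2 * %pi * 220 * (0:9) / Fs);   // stand-in for the samples from wavread()
N = size(snd, '*');
t = (0:N-1) / Fs;

x = [t(:), snd(:)];        // (:) forces column vectors, so x is N rows by 2 columns

// fprintfMat writes a matrix as text, using a C-style format for each number.
outfile = TMPDIR + '/PWL_hello_demo.txt';   // hypothetical file name
fprintfMat(outfile, x, '%.7e');
```

Building x in one step avoids growing the array one row at a time, which matters once N reaches tens of thousands of samples.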

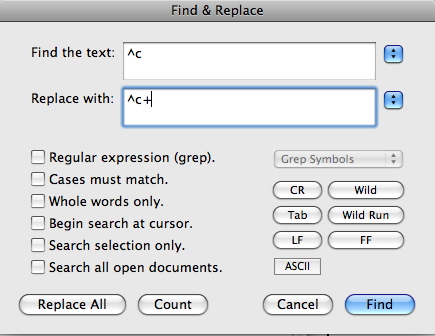

The waveform is now readable in a more convenient format. With a little text

editor magic, this same file can be converted into something that can be imported

into SPICE.

Do a little find and replace all..

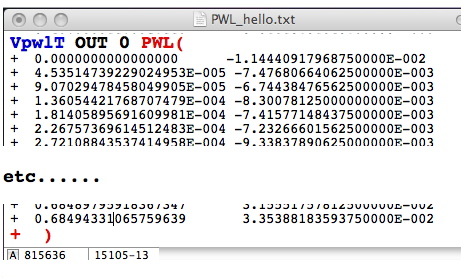

Add a little more formatting...

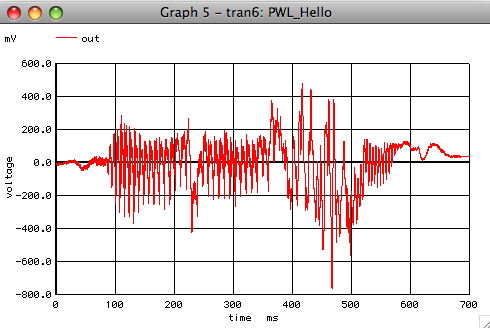

And it can be imported into SPICE as such:

PWL_Hello

*

**          OUT    Rload
*        _________/\    __
*       _|_         \/    |
*      /   \              |
*     /VpwlT\             |
*     \     /            _|_
*      \___/             ///
*        |               Gnd
*       _|_
*       ///
*       Gnd

*

.OPTIONS GMIN =1e-18 METHOD =trap

Rload OUT 0 1k

.include PWL_hello.txt

.tran 50u 700m 0 500m

.control

run

plot out

.endc

.end
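
The find-and-replace steps could instead be done in Scilab by writing the piecewise-linear source directly. This is only a sketch under stated assumptions: the exact contents of the author's PWL_hello.txt are not shown in the text, so the source name VpwlT and the nodes OUT and 0 are inferred from the netlist above, and the output file name is a placeholder.

```scilab
// Write samples as a SPICE piecewise-linear (PWL) voltage source.
// ASSUMPTIONS: source name VpwlT and nodes OUT and 0 are inferred from the
// netlist; the file name below is hypothetical.
Fs = 22050;
snd = [0 0.25 0.5 0.25 0];            // stand-in for the samples from wavread()
N = size(snd, '*');
t = (0:N-1) / Fs;

outfile = TMPDIR + '/PWL_demo.txt';   // hypothetical file name
fd = mopen(outfile, 'wt');
mfprintf(fd, 'VpwlT OUT 0 PWL(\n');
for i = 1:N
    // A leading + marks a continuation line in SPICE.
    mfprintf(fd, '+ %.7e %.7e\n', t(i), snd(i));
end
mfprintf(fd, '+ )\n');
mclose(fd);
```

Each continuation line carries one time/value pair, so the file can be pulled into a netlist with a .include line.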

The important thing is that an audio file can be read straight into an

array where it is easy to get at. And multidimensional arrays can be

created and exported into an audio file format or to a text file format.

=============AB_Listening_Experiments==================================

Seeing a waveform and hearing it are two different things. First off, the

Scilab constant for pi is written %pi, as shown in the example below.

t=soundsec(1); // t = 1 second at 22050

[ncol,nrow]=size(t); // ncol=1 nrow=22050 (t is 1 row by 22050 columns)

s=sin(2*%pi*t); // create 1Hz tone

plot2d(s); // plot s vs index

Waveforms can be viewed versus index or time as desired.

clf(); // Clear graph

plot2d(t,s); // plot s vs t

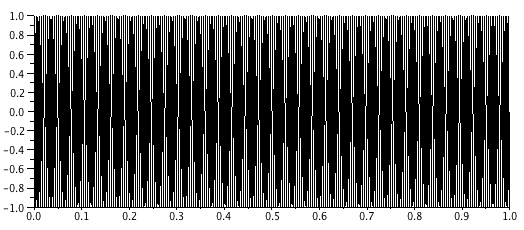

Most audio-frequency signals are hard to see over a one-second period.

t=soundsec(1); // t = 1 second at 22050

s=sin(2*%pi*220*t); // create Note A

plot2d(t,s); // plot a whole second

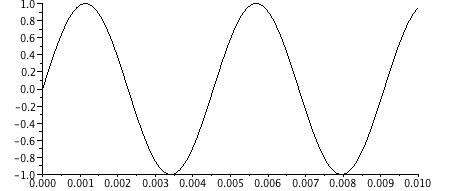

A portion of the waveform can be viewed instead.

clf(); // Clear graph

plot2d(t,s, rect=[0,-1,.01,1]); // plots 10ms period

Now on to hearing the waveform.

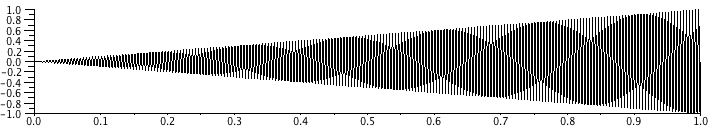

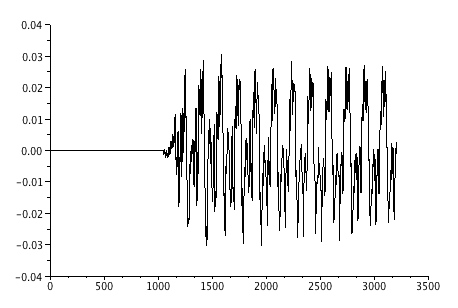

=============Make_Two_Waveforms==================================

When listening for something, it is often convenient to have a reference sound

to make comparisons. In the example below, the rise time is varied

between two waveforms.

path='/Users/donsauer/Documents/KEY/KEY10/scilab/'

t=soundsec(1); // t = 1 second at 22050

[ncol,nrow]=size(t); // ncol=1 nrow =22050.

g=t; // create g as large as t

g(1:nrow) =1; // set g to 1 everywhere

g(1:nrow/5) =5*t(1:nrow/5); // define a gain ramp

s=sin(2*%pi*220*t); // create A Note

for i=1:nrow, s2(i)=g(i)*s(i) ; end; // apply gain to sound

clf(); // clear graph

plot2d(t,s2); // plot sound vs time

savewave( path+'test1.wav',s2); // save s2 as a wave file

g(1:nrow) =1; // set g to 1 everywhere

g(1:nrow/1) =1*t(1:nrow/1); // define a gain ramp

s=sin(2*%pi*220*t); // create A Note

for i=1:nrow, s2(i)=g(i)*s(i) ; end; // apply gain to sound

clf(); // clear graph

plot2d(t,s2); // plot sound vs time

savewave( path+'test2.wav',s2); // save s2 as a wave file
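
The gain can also be applied element by element in one step rather than in a loop. The sketch below reproduces the same two ramps. The helper ramp_tone is a hypothetical name used for illustration, not a Scilab built-in.

```scilab
// Element-wise version of the gain-ramp experiment.
// ramp_tone is a hypothetical helper, not a Scilab built-in.
function s2 = ramp_tone(t, freq, riseFraction)
    n = size(t, '*');
    g = ones(t);                          // gain of 1 everywhere
    nr = round(n * riseFraction);         // samples spent ramping up
    g(1:nr) = (0:nr-1) / nr;              // linear rise from 0 toward 1
    s2 = g .* sin(2 * %pi * freq * t);    // .* multiplies element by element
endfunction

t = soundsec(1);                  // 1 second at the default 22050 samples/s
fast = ramp_tone(t, 220, 0.2);    // gain rises over the first 0.2 s
slow = ramp_tone(t, 220, 1.0);    // gain rises over the whole second
```

The two results can then be saved with savewave() as in the listing above and compared by ear.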

This is where having a web page that plays audio comes in handy. The code to do this

is quite simple. The page can be listened to here and the source can be downloaded here. Once the Scilab program runs, the results can be heard.

Being able to try something, and then both see and hear the results goes a long way.

But there is no reason why hearing experiments cannot be done on an analog breadboard

which is connected to a laptop.

=============Why_Do_It_All_Digitally?============================

A laptop can power an analog breadboard and, with the right software, display the waveforms coming

from it. In the case of a MacBook Pro, listening to the waveforms

is easy as well.

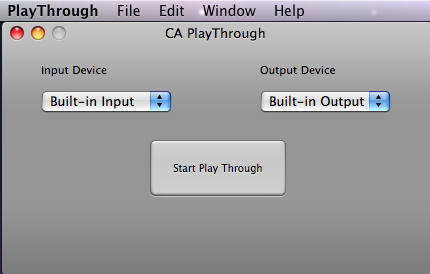

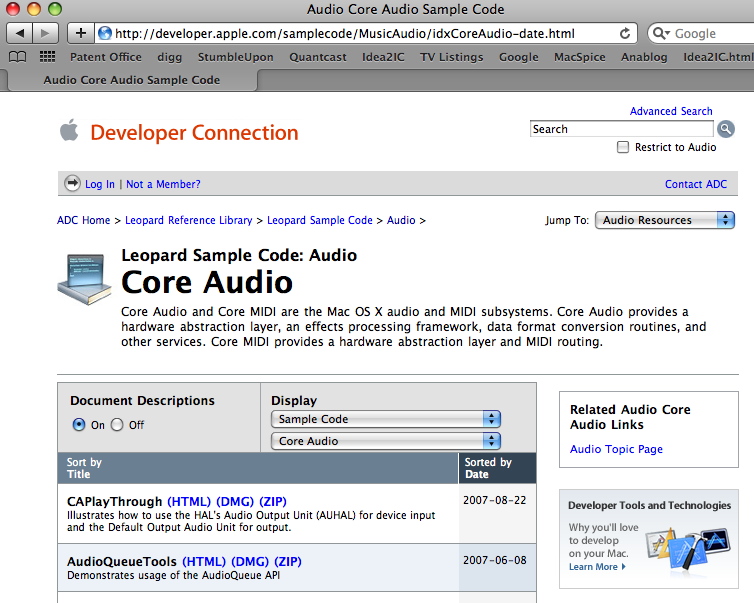

Apple provides working sample code on how to access audio hardware resources

within a Mac. For now, this sample code can hook up what is coming into the

"LineInput" directly to the MacBook's speakers. But if one really wants to get into it,

everything in this code is in place for doing "other" audio things.

The Apple site is provided below. A pre-compiled application comes with the

project. The project is compiled in Xcode. Want to learn how to fully program

both hardware and software in a computer? It's all online.

=============Some_LM13600_Background============================

The LM13600/LM13700 was designed for the organ marketplace when everything was done using

analog circuitry. There appears to be a strong web following of people with a passion

for things like Synth-DIY and analog synthesizers built with analog circuitry.

It certainly is quite fun doing it all using op amps and OTAs. The following web sites

list some of the things happening in this area of analog.

http://members.cox.net/barryklein/

http://www.musicfromouterspace.com/

http://www.musicfromouterspace.com/analogsynth/synthdiy_links.html

So how can sound be modulated to sound like anything? Say one wanted to make an

organ sound like a piano or a trumpet. A sine wave at the correct frequency needs

to be modulated in the right way. And then the rise time and fall time of the signal

need to be set to something we all recognize. Amazingly, the know-how to

do this already comes pre-installed inside many laptops to allow them to play MIDI files.

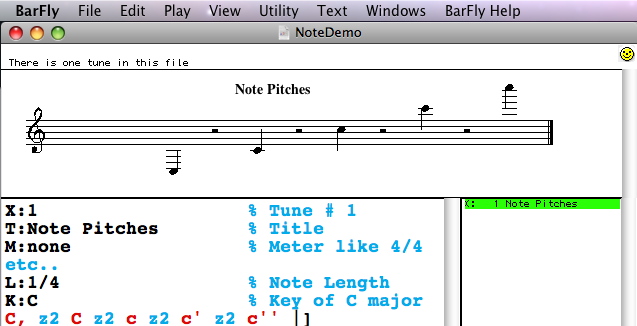

To see this, one can fire up a shareware program like "BarFly".

BarFly uses the ABC Music standard to both display and play music. This format

is all text and is easy to read. For this song example, the note C is played over

five octaves.

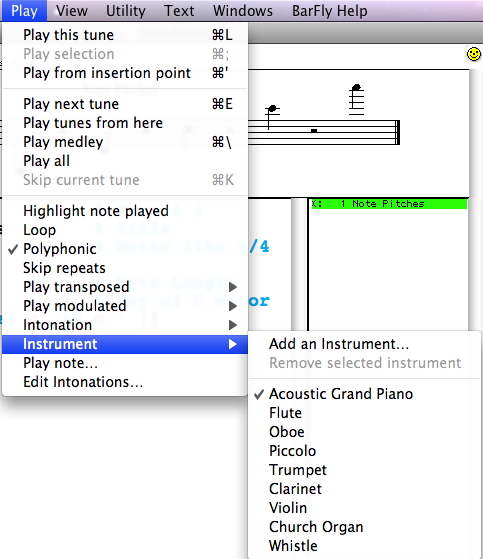

A choice of instruments to play comes as a standard feature.

That which is contained in the "Default Synthesizer" apparently comes

already installed within the Mac to play MIDI files.

The five piano notes can be exported to an audio file format and played

using a web page. One can listen to them here.

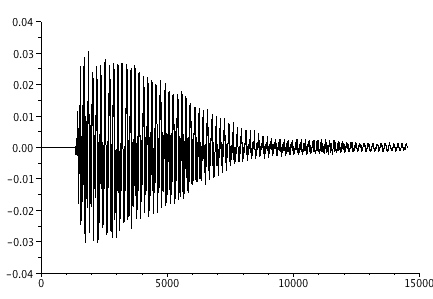

The five piano notes can also be imported into Scilab. Now it's possible to

see things like attack and decay. For that matter, since the data is

read into arrays, waveforms can be modified and listened to. Using analog

circuitry, this modulation can all be done using LM13700s while listening

to the results. Being able to both see and hear things goes a long way.
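
One way to make attack and decay visible is to track the peak level in short blocks of samples. The sketch below is only an illustration: the note is synthetic, with a made-up envelope shape and time constants, where a real note would come from wavread().

```scilab
// Estimate the attack and decay of a note from its peak level per block.
// ASSUMPTION: the test note is synthetic (fast rise, exponential decay);
// the envelope shape and time constants are made up for illustration.
Fs = 22050;
t = (0:Fs-1) / Fs;                                // one second
env = (1 - exp(-t / 0.005)) .* exp(-t / 0.3);     // made-up envelope
note = env .* sin(2 * %pi * 262 * t);             // roughly middle C

blockLen = 441;                                   // 20 ms per block at 22050 samples/s
nBlocks = floor(size(note, '*') / blockLen);
blocks = matrix(note(1:nBlocks * blockLen), blockLen, nBlocks);  // one block per column
peak = max(abs(blocks), 'r');                     // largest magnitude in each column
tBlock = ((0:nBlocks-1) + 0.5) * blockLen / Fs;   // centre time of each block
```

Calling plot2d(tBlock, peak) then shows the rise and fall of the note without the individual cycles getting in the way.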

Do the rise and decay of a note need to be a certain way to sound

like a piano? What would it take to make it not sound like a piano?

That is why having full modulation access to the waveform is convenient.

And the distortion of the waveform is also something we recognize hearing.

Different instruments will look different. And Scilab has the ability to

do a complex FFT. One can think of all kinds of experiments that could be

performed on the harmonics. So what does it take to make a sound no longer

sound like a piano or trumpet? One no longer has to record sounds off

of different instruments to see how they are modulated differently. This

know-how is already built into any computer that can play MIDI files. But

instrument recording can still be done on the laptop if so desired.
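
As a starting point for experiments on harmonics, the sketch below uses Scilab's fft() to measure the components of a tone. The signal is an assumption made for illustration: a 220 Hz fundamental plus two weaker harmonics with made-up levels, not a recorded instrument.

```scilab
// Look at the harmonic content of a tone with fft().
// ASSUMPTION: the signal is synthetic, a 220 Hz fundamental plus two
// weaker harmonics with made-up levels.
Fs = 22050;
N = Fs;                                  // one second, so FFT bins are 1 Hz apart
t = (0:N-1) / Fs;
s = sin(2*%pi*220*t) + 0.5*sin(2*%pi*440*t) + 0.25*sin(2*%pi*660*t);

S = fft(s);                              // complex spectrum, N points
mag = 2 * abs(S(1:N/2)) / N;             // amplitude of each bin below Fs/2
f = (0:N/2-1) * Fs / N;                  // frequency of each bin in Hz

[pk, k] = max(mag);                      // strongest component
mprintf("strongest component near %d Hz\n", f(k));
```

Replacing s with samples read by wavread() gives the same view of a real note, although its harmonics will generally not fall exactly on a single bin.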

For what it's worth, technology today appears to be coming to us, and laying

some incredible things literally down in our laps.

8/28/9 dsauersanjose@aol.com